Accurate visual localization in dense urban environments is a fundamental task in photogrammetry, geospatial information science, and robotics. Imagery is a low-cost and widely accessible sensing modality, but its effectiveness for visual odometry is often limited by textureless surfaces, severe viewpoint changes, and long-term drift. The growing public availability of airborne laser scanning (ALS) data opens new avenues for scalable and precise visual localization that uses ALS point clouds as a prior map.

The benchmark offers:

- A new large-scale dataset that combines ground-level imagery from mobile mapping systems with ALS point clouds.

- Accurate 6-DoF ground-truth image poses achieved by registering mobile LiDAR submaps to ALS data using ground segmentation and façade reconstruction, followed by multi-sensor pose graph optimization.

- A unified benchmarking suite for both global and fine-grained image-to-point cloud (I2P) localization.
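Fine-grained localization is commonly scored by comparing each estimated 6-DoF pose with its ground truth. The minimal sketch below computes the rotation error (the geodesic angle of the relative rotation) and the translation error, plus the fraction of queries that fall under both thresholds. The function names, the camera-to-world pose convention, and the 5° / 0.5 m default thresholds are assumptions for illustration, not the benchmark's official evaluation protocol.

```python
import numpy as np


def pose_errors(T_est, T_gt):
    """Return (rotation error in degrees, translation error in metres).

    Assumes both inputs are 4x4 camera-to-world poses (hypothetical convention),
    so the translation column is the camera position in the map frame.
    """
    T_est, T_gt = np.asarray(T_est, dtype=float), np.asarray(T_gt, dtype=float)
    R_est, t_est = T_est[:3, :3], T_est[:3, 3]
    R_gt, t_gt = T_gt[:3, :3], T_gt[:3, 3]
    # Geodesic angle of the relative rotation R_est^T @ R_gt
    cos_angle = (np.trace(R_est.T @ R_gt) - 1.0) / 2.0
    # Clip so round-off cannot push the value outside arccos's domain
    rot_err_deg = np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0)))
    # Euclidean distance between the two camera positions
    trans_err_m = np.linalg.norm(t_est - t_gt)
    return float(rot_err_deg), float(trans_err_m)


def success_rate(errors, max_rot_deg=5.0, max_trans_m=0.5):
    """Fraction of (rot_deg, trans_m) pairs within both thresholds.

    The default thresholds are hypothetical placeholders, not benchmark values.
    """
    if not errors:
        return 0.0
    hits = sum(1 for r, t in errors if r <= max_rot_deg and t <= max_trans_m)
    return hits / len(errors)
```

For example, an estimate rotated 10° about the vertical axis and offset by (0.3, 0.4, 0) m from the ground truth yields errors of about 10° and 0.5 m.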

Data Access

The point cloud data, images, ground-truth poses, and tools are available at:

https://drive.google.com/drive/folders/1iDc9rFmDA_FBUS-nj9ep-1pLyWfh0lp5?usp=sharing

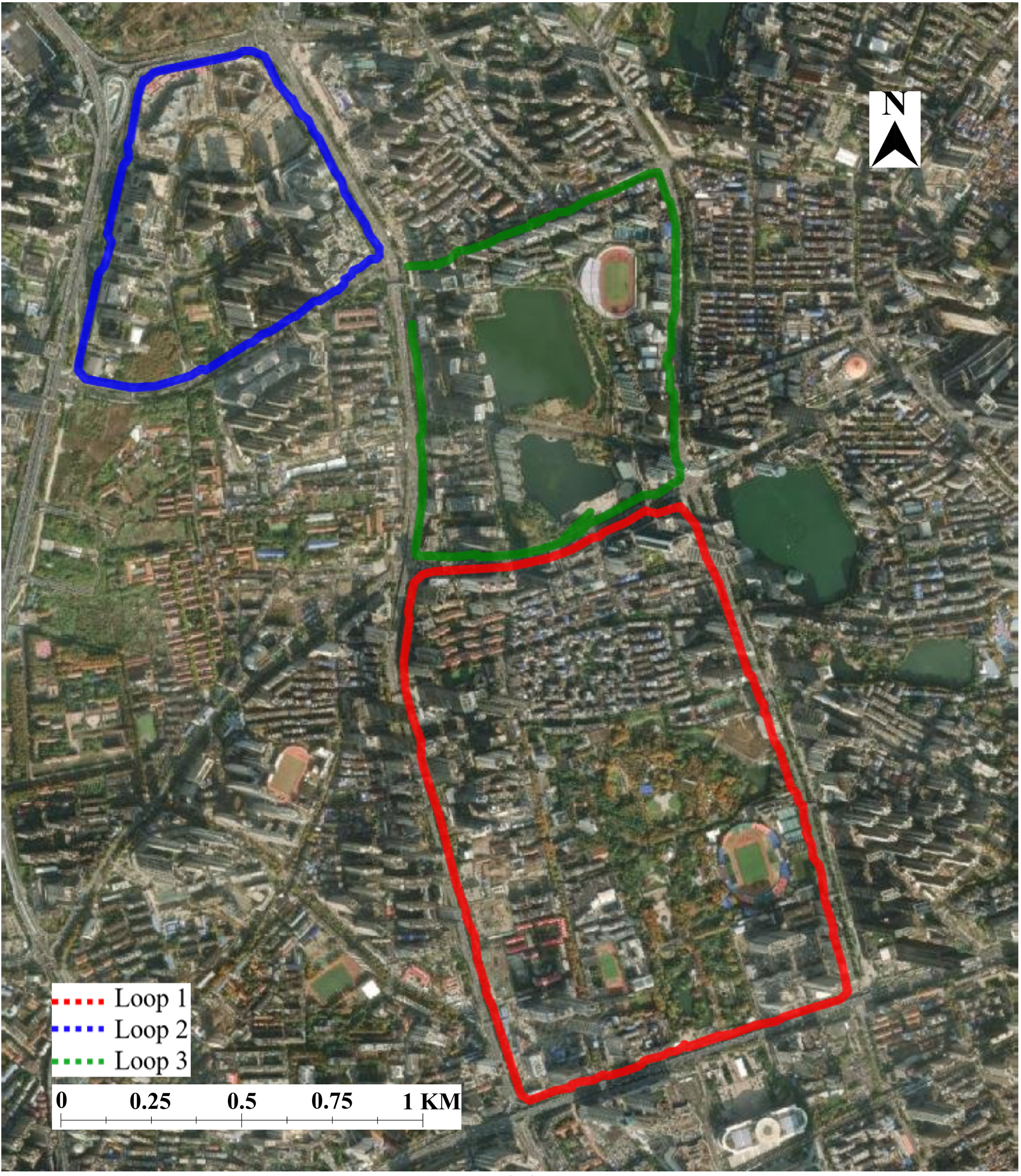

Figure 1. Overview of the trajectories.

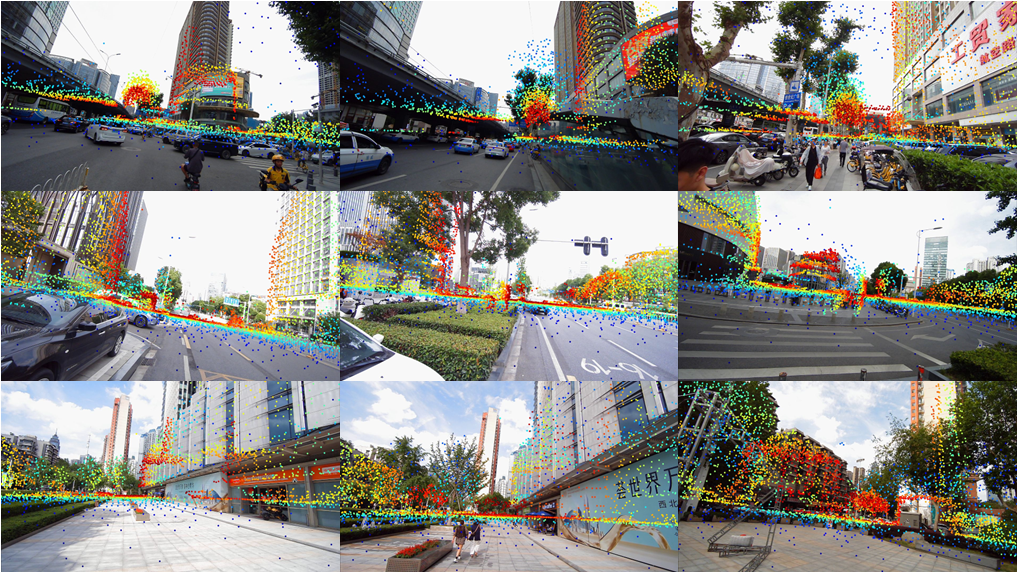

Figure 2. Projection of ALS point clouds to images.
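Overlays like the one in Figure 2 rely on projecting ALS points into each image with its pose. A minimal sketch of such a projection follows, assuming an undistorted pinhole camera with a 3×3 intrinsic matrix `K` and a 4×4 world-to-camera transform; the dataset's actual camera model and pose file format may differ, and `project_points` is a hypothetical helper rather than part of the released tools.

```python
import numpy as np


def project_points(points_world, T_cam_world, K, width, height):
    """Project Nx3 world points into an undistorted pinhole camera.

    T_cam_world: 4x4 world-to-camera transform (hypothetical convention,
    camera z axis pointing forward). K: 3x3 intrinsic matrix.
    Returns pixel coordinates (Mx2), depths (M,) and the indices of the
    input points that land inside the width x height image.
    """
    pts = np.asarray(points_world, dtype=float)
    T = np.asarray(T_cam_world, dtype=float)
    K = np.asarray(K, dtype=float)
    # Homogeneous coordinates so one product applies rotation and translation
    pts_h = np.hstack([pts, np.ones((pts.shape[0], 1))])
    pts_cam = (T @ pts_h.T).T[:, :3]
    depth = pts_cam[:, 2]
    # Keep only points in front of the camera
    in_front = depth > 1e-6
    uvw = (K @ pts_cam[in_front].T).T
    # Perspective division gives pixel coordinates
    uv = uvw[:, :2] / uvw[:, 2:3]
    inside = (uv[:, 0] >= 0) & (uv[:, 0] < width) & (uv[:, 1] >= 0) & (uv[:, 1] < height)
    idx = np.flatnonzero(in_front)[inside]
    return uv[inside], depth[idx], idx
```

The returned depths could, for example, be used to colour the projected points or to build a sparse depth image for each frame.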

Table 1. Number of image-ALS point cloud pairs

| Dataset | Pairs |
|---|---|
| Wuhan Loop 1 | 8526 |
| Wuhan Loop 2 | 5206 |
| Wuhan Loop 3 | 5663 |

Citation

If you use this benchmark in your research, please cite the following paper:

    @article{yang2026agi2p,
      title={AGI2P: Benchmarking Aerial--Ground Image-to-Point cloud localization with a large-scale dataset},
      author={Yang, Yandi and Li, Jianping and Liao, Youqi and Li, Yuhao and Niu, Ruizhe and Zhang, Yizhe and Dong, Zhen and Yang, Bisheng and El-Sheimy, Naser},
      journal={ISPRS Journal of Photogrammetry and Remote Sensing},
      volume={236},
      pages={22--36},
      year={2026},
      publisher={Elsevier}
    }