In 2014, ISPRS introduced the so-called Scientific Initiative to support projects of interest to the ISPRS community. Calls are normally launched in the autumn of even-numbered years. Details of the regulations can be found at http://www.isprs.org/documents/orangebook/app9a.aspx.

Reports of previous calls are available at

ISPRS Scientific Initiatives 2025

As a result of the competition held in the autumn of 2024, ISPRS Council has approved the following five SI2025 projects for funding. Council would like to thank all applicants who responded to this Call for Proposals. The following provides summaries of these projects together with details of their principal investigator(s) and co-investigator(s):

A forecasted surface velocity database for cities of the Mediterranean southern coast to assess coastal displacement contribution to shoreline change by 2030

PI: B. Mohamadi, Shaoxing University, China

CoIs: T. Balz (Wuhan University, China), T. ElGharbawi (Suez Canal University, Egypt), F. Chaabane (SUP’COM, Tunisia), K. Hasani (Algerian Space Agency, Algeria), L.-M. Rebelo (Digital Earth Africa, Kenya)

Coastal erosion is a serious problem facing many African coastal countries. It results in the degradation of nearshore assets and is projected to drive massive population displacement from the African coasts in the near future. The estimated annual losses from this problem, which impacts 270 million people living on African coasts, amount to about 7 billion USD. Some researchers focus only on detecting shoreline dynamics over time and neglect the coastal surface displacement factor, which could affect their forecasts of coastal inundation and shoreline change. This project intends to develop a database of InSAR measurements of current coastal vertical displacement and to forecast future land subsidence in coastal cities using deep learning. This database will enable researchers to investigate the current and future contribution of coastal subsidence to local sea level rise forecasting. The project will focus on the main African cities located on the southern coast of the Mediterranean Sea, from Al-Arish in Egypt in the east to Tangier in Morocco in the west. According to the Digital Earth Africa (DE Africa) report on African shoreline changes, this region experiences an erosion rate of 1.58 meters annually on average. This database will help researchers assess the contribution of coastal area subsidence to coastal erosion in northern Africa.

It is crucial to detect and forecast the vertical motion of coastal areas in order to identify those that are sinking and protect them from potential marine surges and floods. Detecting uplifting land is also crucial because uplift lowers the local relative sea level and encourages the migration of coastlines toward the sea. Interferometric synthetic aperture radar (InSAR) has demonstrated its accuracy in monitoring surface displacement on a large scale and with a high spatial resolution without requiring the installation of permanent stations. This makes it a cost-effective method for tracking the earth's surface displacement. Deep learning, for its part, has proven its capability to forecast future trends based on time series measurements of the studied phenomena. Deep learning models such as ResNet, ResCNN, and InceptionTime have shown remarkable capabilities for time series forecasting in different remote sensing applications.

The objectives of this project are to analyse the surface displacement of 27 major cities in North Africa, decompose vertical displacement based on the InSAR surface displacement results, forecast subsidence levels in the near future, and identify areas of current and future high subsidence risk and their potential impact on shoreline retreat. The produced database will be freely accessible to everybody through the Digital Earth Africa Platform.
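The decomposition step named in the objectives can be illustrated with a minimal sketch. It is not the project's actual processing chain: it assumes one ascending and one descending line-of-sight (LOS) measurement per point, a right-looking sensor on a near-polar orbit, LOS values positive toward the satellite, and negligible north-south motion. The function names and the incidence angles in the usage lines are hypothetical.

```python
import math


def los_coefficients(incidence_deg, ascending):
    """Return the (east, up) projection coefficients of the LOS direction.

    Assumptions (not from the project description): right-looking sensor,
    near-polar orbit, LOS positive toward the satellite, north-south
    motion neglected.
    """
    theta = math.radians(incidence_deg)
    # An ascending right-looking sensor looks roughly east, so eastward
    # motion moves the ground away from it (negative LOS contribution).
    east = -math.sin(theta) if ascending else math.sin(theta)
    up = math.cos(theta)
    return east, up


def decompose(d_asc, d_desc, inc_asc_deg, inc_desc_deg):
    """Solve the 2x2 linear system for (east, up) displacement."""
    a_e, a_u = los_coefficients(inc_asc_deg, ascending=True)
    b_e, b_u = los_coefficients(inc_desc_deg, ascending=False)
    det = a_e * b_u - a_u * b_e
    if abs(det) < 1e-12:
        raise ValueError("viewing geometries too similar to separate east and up")
    # Cramer's rule
    d_east = (d_asc * b_u - a_u * d_desc) / det
    d_up = (a_e * d_desc - b_e * d_asc) / det
    return d_east, d_up


# Hypothetical LOS rates in mm/year and hypothetical incidence angles.
east_rate, up_rate = decompose(-8.5, -7.0, inc_asc_deg=39.0, inc_desc_deg=34.0)
print(f"east: {east_rate:.2f} mm/yr, up: {up_rate:.2f} mm/yr")
```

A negative `up_rate` would indicate subsidence under this sign convention. With only one viewing geometry available, the vertical component cannot be separated this way without further assumptions.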

Final Report »

ISPRS benchmark on 3D point cloud to model registration (PC2Model)

PI: M. Maboudi, Technical University of Braunschweig, Germany

CoIs: K. Khoshelham (University of Melbourne, Australia), Y. Turkan (Oregon State University, USA), K. Mawas (Technical University of Braunschweig, Germany)

Accurate registration of point clouds to 3D models is critical in a variety of fields, including but not limited to progress monitoring of construction sites, precise mapping, digital 3D printing, and virtual/augmented reality.

Point cloud (PC) data, often obtained through LiDAR, photogrammetry, or structured light scanning, captures detailed geometric information about physical environments. However, aligning these point clouds with pre-existing 3D models, typically generated through computer-aided design (CAD) or other modeling techniques, remains challenging. Most current 3D registration methods, including iterative closest point (ICP) and recent learning-based approaches, focus specifically on point cloud registration and depend heavily on point-wise correspondences. This reliance limits their effectiveness in handling registration tasks across multimodal datasets. Registration must overcome several challenges, such as data sparsity, noise, clutter, and occlusions. This proposal aims to address these challenges by providing a benchmark dataset. A key component of this proposal is the justification for using simulated datasets as a training and evaluation resource. Simulated datasets offer several advantages in point cloud to 3D model registration. First, simulation allows for precise ground-truth alignment between point clouds and 3D models, a key requirement for accurate algorithm assessment. The availability of ground-truth correspondences between the simulated point cloud and the model can significantly enhance the quality of the registration algorithm, providing clear metrics for error evaluation and algorithm improvement. Second, simulations can vary object configurations, point densities, and noise levels systematically, presenting the algorithm with a comprehensive array of scenarios that mirror real-world complexities. Such variations are invaluable in training deep learning-based methods, which require large and diverse datasets to avoid overfitting.

In the proposed benchmark, the simulated dataset will be complemented by a real-world dataset, so that registration algorithms can be developed and benchmarked on both simulated and real-world data. This combined dataset aims to provide a diverse and representative resource that can be leveraged in training machine/deep learning models for various 3D point cloud processing tasks, with a focus on improving performance in 3D modeling and registration. Employing the provided dataset, researchers can evaluate conventional optimization-based methods, such as ICP, and explore the potential of machine learning approaches to enhance registration accuracy and efficiency. It will also be possible to validate with real-world data to confirm that the diversity of the simulated data successfully enhances real-world performance. This hybrid approach of simulation-based training followed by real-world validation promises to deliver a robust, generalizable registration solution.
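As a reference point for the conventional baseline mentioned above, the following is a minimal point-to-point ICP sketch in NumPy. It assumes the 3D model has already been sampled into a set of points (`model_pts`), which is a simplification and not necessarily how the benchmark will represent models. It uses brute-force nearest-neighbour search, so it is only suitable for small examples. All function names are illustrative.

```python
import numpy as np


def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t mapping src onto dst
    for known one-to-one correspondences (SVD-based Kabsch solution)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a rotation, not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def nearest_neighbours(points, targets):
    """Brute-force nearest target index and distance for every point."""
    d2 = ((points[:, None, :] - targets[None, :, :]) ** 2).sum(axis=2)
    idx = d2.argmin(axis=1)
    return idx, np.sqrt(d2[np.arange(len(points)), idx])


def icp(scan, model_pts, max_iters=50, tol=1e-12):
    """Align scan to model_pts; returns accumulated R, t and the aligned scan."""
    R_total, t_total = np.eye(3), np.zeros(3)
    current = scan.copy()
    prev_err = np.inf
    for _ in range(max_iters):
        idx, dist = nearest_neighbours(current, model_pts)
        err = float(np.mean(dist ** 2))
        if prev_err - err < tol:  # no further improvement
            break
        prev_err = err
        R, t = best_rigid_transform(current, model_pts[idx])
        current = current @ R.T + t
        R_total = R @ R_total
        t_total = R @ t_total + t
    return R_total, t_total, current
```

With simulated data, the ground-truth transform is known, so the estimated `R_total` and `t_total` can be compared against it directly instead of relying only on the residual nearest-neighbour distance.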

In conclusion, the benchmark datasets offer a controlled, scalable, and cost-effective means of training and evaluating registration algorithms. By using simulations to address the limitations of real-world data, this research aims to advance the state of the art in point cloud to 3D model registration, paving the way for more precise and efficient solutions in fields that rely on the integration of physical and digital spatial information.

Final Report »

International contest of automated individual-tree delineation from close-range point clouds

PI: Liang, Wuhan University, China

CoIs: Y. Wang (Wuhan University, China), H. Qi (Wuhan University, China)

Individual trees are basic elements in forest environments. Their size, distribution, and structure play essential roles in biophysical activities. The quantitative description of three-dimensional (3D) forest structure is, however, extremely difficult because of the complexity and heterogeneity of forest systems. Measurements of 3D structure using conventional methods in the field are laborious, imprecise and often impractical. Recent developments in close-range sensing technologies have significantly enhanced the capability of forest investigation, where close-range sensing observes objects at target-to-sensor distances ranging from contact or non-contact short range up to several hundred meters.

The past two decades have witnessed extensive studies on individual tree delineation (ITD) from close-range sensing data. Machine learning (ML) based methods have been widely studied. Current ML solutions have been shown to achieve fairly accurate ITD results in easy forests. When forest conditions become more challenging, clear omission and commission errors can be expected. Recently, deep learning (DL) based methods have come to be regarded as a new cornerstone of algorithm development that provides feasible solutions for accurate forest delineation. Several works have tried to segment forests into individual trees using DL methods.

ML methods rely on expert knowledge and experience. They may have limited scalability if the models do not generalize well across different application domains. DL methodologies are data-driven and therefore require substantial quantities of data for training and testing. A common challenge for algorithm development is the lack of standard datasets. Overall, the quality of the training datasets, e.g., the representativeness and correctness of the annotations, significantly impacts the performance of the trained model, e.g., its applicability, reliability, and transferability. Both ML and DL require high-quality annotated datasets for algorithm development. For DL methods in particular, this requirement is more critical and urgent.

Among various data sources, point cloud data allow a direct 3D digitization of forest and tree structures and enable an automated estimation of structural parameters at a high level of detail and accuracy. In particular, terrestrial laser scanning (TLS) provides the highest geometric data quality at the plot level. TLS is capable of providing dense points at the millimeter level, and its data are usually less noisy than those from other data sources, such as mobile and UAV data, owing to the high-quality hardware and static acquisition mode. In general, the results achieved from high-quality TLS data can be understood as a benchmark for results from other 3D point cloud data.

This scientific initiative project aims to clarify the current state of the art of ITD from close-range point clouds, to study the strengths and limitations of current solutions, and to promote the development of new ML/DL approaches for ITD. An international contest of automated ITD from close-range point clouds will be launched. The contest aims to encourage participants around the world to develop their own ITD methods and to demonstrate their performance in the contest. The contest will provide datasets and references. Results will be evaluated through standardized procedures and announced after the contest.
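The contest's evaluation procedure is not specified here. As an illustration of how omission and commission errors are commonly quantified, the sketch below matches detected trees to reference trees by planimetric distance and derives tree-level rates. The representation of each tree by a single (x, y) stem position, the greedy one-to-one matching, and the `max_dist` threshold of 0.5 m are all assumptions made for this example.

```python
import math


def match_trees(reference, detected, max_dist=0.5):
    """Greedy one-to-one matching of detected to reference stem positions.

    reference, detected: lists of (x, y) positions in metres.
    max_dist: hypothetical matching threshold in metres.
    """
    candidates = []
    for i, (rx, ry) in enumerate(reference):
        for j, (dx, dy) in enumerate(detected):
            dist = math.hypot(rx - dx, ry - dy)
            if dist <= max_dist:
                candidates.append((dist, i, j))
    candidates.sort()  # closest pairs are matched first
    used_ref, used_det, pairs = set(), set(), []
    for _, i, j in candidates:
        if i not in used_ref and j not in used_det:
            used_ref.add(i)
            used_det.add(j)
            pairs.append((i, j))
    return pairs


def delineation_scores(reference, detected, max_dist=0.5):
    """Tree-level completeness, correctness, omission, commission and F1."""
    matched = len(match_trees(reference, detected, max_dist))
    completeness = matched / len(reference) if reference else 0.0
    correctness = matched / len(detected) if detected else 0.0
    denom = completeness + correctness
    f1 = 2 * completeness * correctness / denom if denom else 0.0
    return {
        "completeness": completeness,
        "correctness": correctness,
        "omission": 1.0 - completeness,    # reference trees that were missed
        "commission": 1.0 - correctness,   # detections with no reference tree
        "f1": f1,
    }


reference = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
detected = [(0.1, 0.0), (5.2, 0.1), (20.0, 20.0), (0.3, 0.0)]
print(delineation_scores(reference, detected))
```

In this toy example two of the three reference trees are found and two of the four detections are spurious. Point-level measures such as per-tree intersection over union would need the segmented points themselves and are not covered by this sketch.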

Developing food insecurity index and vulnerability information system (FIVIMS) for a tribal district- a case of Alirajpur District, Madhya Pradesh, India

PI: K. Saha, School of Planning and Architecture, India

CoIs: M.R. Delavar (University of Tehran, Iran), B.S.P.C. Kishore (School of Planning and Architecture, India)

Food security remains a pressing issue for marginalized communities in regions like Alirajpur District, Madhya Pradesh, India. This population often faces high levels of malnutrition, food scarcity, and poverty, exacerbated by limited access to essential resources and services. The Public Distribution System (PDS), although well-intentioned, faces several logistical and outreach challenges that hinder its effectiveness. To tackle these issues, integrating innovative technologies such as Geospatial Artificial Intelligence (GeoAI) offers a promising solution.

Alirajpur District, located in the state of Madhya Pradesh, is home to a significant tribal population, predominantly belonging to the Bhil community, with a population of around 647,653 (2011 Census of India). The Bhil tribe is the third largest tribe in India and the second largest in Madhya Pradesh. The Bhils live in dry and less fertile areas of the state. They therefore build their houses in the agricultural fields themselves to protect the crops, which leaves their population scattered. Their main occupation is agriculture. Alirajpur District already struggles with erratic monsoons, and the overreliance on soybean farming exacerbates the issue. The district's fragile ecosystem, characterized by marginal soils and low water availability, is being further strained by the high water demands of soybean production, leading to groundwater depletion and heightened vulnerability to drought. The cycle of low rainfall, high water extraction, and reduced agricultural productivity has trapped Alirajpur District in a state of persistent drought and food insecurity. This study, therefore, aligns with Sustainable Development Goals (SDGs) such as SDG 1 ("No Poverty") and SDG 2 ("Zero Hunger").

This research aims to develop a Food Insecurity Index and Vulnerability Information System (FIVIMS) for the Alirajpur District using Geospatial Artificial Intelligence (GeoAI) tools. The research methodology involves identifying indicators for the development of the FIVIMS in the context of Alirajpur District, namely Food Availability, Food Access and Food Absorption. Through an exhaustive literature review, parameters for each indicator will be identified (see Figure 1, the method flow chart), and extensive data collection will be carried out. Both primary and secondary data collection techniques will be used to develop the FIVIMS. A final map will be generated as a key output, which will highlight food-insecure areas, monitor improvements over time, and visualize the correlation between income levels, employment opportunities, and food security. The FIVIMS will reflect the spatio-temporal food security status and the impact of development interventions. With the help of GeoAI tools, this research is pioneering in its integration of spatio-temporal map analysis, data mining, and data science with traditional socio-economic analysis, creating a comprehensive framework to improve food security and economic empowerment for the tribal population in Alirajpur District. By visually representing disparities in resource access, the study provides policymakers with actionable insights for effective solutions that combine data-driven and knowledge-driven approaches to enhance community resilience and achieve sustainable development goals in tribal regions.
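How the three indicators will be combined into an index is not stated above. One common way to build a composite index is min-max normalisation followed by a weighted average, sketched below. The equal weights, the convention that higher raw scores mean better food security, and the sample values are all assumptions for illustration, not the project's method.

```python
def min_max(values):
    """Rescale values to [0, 1]; a constant column maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def food_insecurity_index(availability, access, absorption,
                          weights=(1 / 3, 1 / 3, 1 / 3)):
    """Composite index per spatial unit (e.g. village), in [0, 1].

    Each input list holds one raw score per unit, where a higher score is
    assumed to mean better food security. The index is 1 minus the weighted
    mean of the normalised scores, so higher values mean more insecurity.
    The equal default weights are a placeholder, not a recommendation.
    """
    if not (len(availability) == len(access) == len(absorption)):
        raise ValueError("all indicators need one value per spatial unit")
    columns = [min_max(availability), min_max(access), min_max(absorption)]
    total = sum(weights)
    index = []
    for unit_scores in zip(*columns):
        security = sum(w * s for w, s in zip(weights, unit_scores)) / total
        index.append(1.0 - security)
    return index


# Hypothetical scores for three villages.
print(food_insecurity_index([10, 20, 30], [1, 2, 3], [0, 50, 100]))
```

Mapping the resulting values per village or block would give the kind of food-insecurity map described above. The choice of weights strongly affects the ranking and would need to be justified from the literature review or expert input.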

Air quality from pictures: benchmarking and assessment of image-based methods for particulate matter estimation (AQpictures)

PI: D. Oxoli, Politecnico di Milano, Italy

CoIs: S. Li (Toronto Metropolitan University, Canada), S. Xu (Beijing University of Civil Engineering and Architecture, China), M. Brovelli (Politecnico di Milano, Italy), F. Pirotti (University of Padova, Italy), A. Moazzam (Politecnico di Milano, Italy)

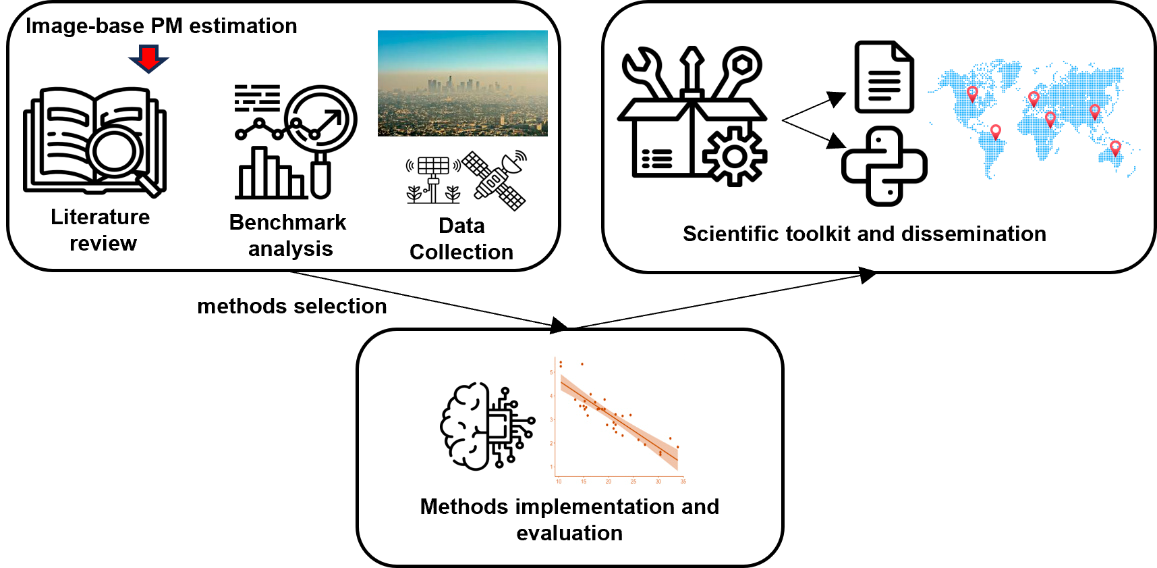

Air quality is an increasing concern for public health, with particular attention on Particulate Matter (PM), a widespread pollutant whose exposure is strongly linked to the burden of respiratory and cardiovascular diseases. Traditional PM monitoring systems rely on ground sensors, which provide accurate and regulation-compliant measurements but have limited spatial coverage and require costly infrastructure, highlighting the need for complementary monitoring approaches. Recent studies have demonstrated that visual characteristics of outdoor images, such as sky lighting and colour features or the visibility of distant object outlines, can correlate with ambient PM concentration. Accordingly, different methods have been proposed in the scientific literature to infer this correlation, offering the potential for PM monitoring through simple images taken from widely available devices like mobile phones and fixed webcams. Since these methods were proposed only recently and have not been thoroughly tested across various geographic areas, shortcomings concerning their accuracy and applicability remain. With this in mind, the AQpictures project aims to carry out a benchmark analysis of available image-based PM estimation methods by implementing the most significant ones and assessing their accuracy against authoritative PM measurements and satellite aerosol products. The workflow adopted to implement and test these methods will be organized into a supporting scientific toolkit that will be made freely available to everyone in the ISPRS community and beyond. The toolkit will include open-source code scripts, analysis-ready sample datasets, and relevant technical documentation, which will support further tests, reviews and adaptations of the methods, aligning with the ISPRS commitment to advancing geospatial applications in environmental monitoring. Finally, an open dissemination workshop and a scientific publication will integrate and disseminate the project’s outputs.
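The accuracy assessment described above can be illustrated with standard agreement metrics between image-based estimates and reference station measurements. The sketch below is not part of the AQpictures toolkit. The choice of RMSE, MAE, mean bias and Pearson correlation, the function names, and the sample values are assumptions for illustration.

```python
import math


def rmse(estimated, reference):
    """Root mean square error between paired values."""
    return math.sqrt(sum((e - r) ** 2 for e, r in zip(estimated, reference))
                     / len(reference))


def mae(estimated, reference):
    """Mean absolute error between paired values."""
    return sum(abs(e - r) for e, r in zip(estimated, reference)) / len(reference)


def mean_bias(estimated, reference):
    """Mean signed error; positive means the estimates are too high."""
    return sum(e - r for e, r in zip(estimated, reference)) / len(reference)


def pearson_r(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical PM2.5 values in micrograms per cubic metre.
image_based = [10.0, 20.0, 30.0]
station = [12.0, 18.0, 33.0]
print(rmse(image_based, station), mae(image_based, station),
      mean_bias(image_based, station), pearson_r(image_based, station))
```

Reporting the signed bias alongside RMSE and correlation helps distinguish a method that tracks PM variations but is systematically offset from one that is simply noisy.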

Final Report »